AWS RoboMaker extends the Robot Operating System (ROS) with Amazon's cloud services. Get started learning about it here!

Robotics is a sophisticated interdisciplinary field involving intricate hardware design, complex software stacks, and high-fidelity communication between them. An ideal robot software stack should be modular, decentralized, manageable, and extensible while still supporting seamless integration with the hardware. Because robot software requirements and performance needs change frequently, it is extremely challenging to set up a development environment that supports fast code development, testing, deployment, and maintenance while staying adaptable to evolving hardware.

To address these issues, companies have been working on more widely applicable solutions. This article explains how Amazon Web Services (AWS) RoboMaker was conceived to remove the obstacles that keep robots from becoming a household commodity. It also explains the concept of AWS RoboMaker, its significance, and how to get started with a sample robot application.

What is AWS RoboMaker?

Robot Operating System (ROS) is a widely used open-source robotics middleware platform that allows offline robot development. Like many middleware platforms, ROS has limitations in portability, setup, package management, and deployment.

Note: AWS provides several cloud-based services, and RoboMaker is one of them. Running one service may also start several other services under the hood. Although users get a basic free usage limit, you should turn these services off when they are not in use to avoid incurring costs, because it is easy to exceed the free limits within a couple of days.

AWS RoboMaker provides a cloud instance of the ROS middleware behind a simple, API-like interface. It includes Cloud9, a browser-based, full-featured IDE that comes pre-configured with ROS. It also has a ROS build tool to set up the software for different hardware platforms.

The browser-based editor supports multiple programming languages with auto-complete for the ROS packages. RoboMaker also provides a robot simulation and testing platform that supports parallel instances across different deployment environments and scenes.

AWS RoboMaker attempts to create a holistic environment for robot development, testing, and deployment, all available in the cloud and scalable to production. It abstracts away the painstaking process of software setup and package installation, platform incompatibilities, and dependency management for different third-party tools. A user only needs to connect the hardware to AWS RoboMaker.

The AWS RoboMaker simulation software has support for Gazebo, Rviz, and rqt. RoboMaker adds Amazon CloudWatch and Amazon S3 for data monitoring and logging in the simulation environment itself. This data could be robot motion information, sensor data, reaction times, and other hardware and software metrics. All such features make RoboMaker a very useful tool for individuals and companies alike to get started with their complete robot product while avoiding the hassles of the infrastructural setup.
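As a rough illustration of the kind of data that could be sent to CloudWatch, the sketch below builds a metric payload for a robot reaction time. The dictionary layout follows the general shape of a CloudWatch `PutMetricData` request as I understand it; the namespace `RobotSimulation`, the dimension name `RobotName`, and the helper `build_metric_payload` are hypothetical names chosen for this example, and nothing is actually sent to AWS.

```python
def build_metric_payload(robot_name, metric_name, value, unit="None",
                         namespace="RobotSimulation"):
    """Build a dict shaped like a CloudWatch PutMetricData request.

    The namespace and dimension name are illustrative assumptions,
    not values defined by RoboMaker.
    """
    return {
        "Namespace": namespace,
        "MetricData": [
            {
                "MetricName": metric_name,
                # A dimension lets metrics be filtered per robot.
                "Dimensions": [{"Name": "RobotName", "Value": robot_name}],
                "Value": float(value),
                "Unit": unit,
            }
        ],
    }


if __name__ == "__main__":
    payload = build_metric_payload("racecar-1", "ReactionTime", 0.12, unit="Seconds")
    print(payload)
```

With AWS credentials configured, a payload like this could presumably be passed as keyword arguments to a CloudWatch client's `put_metric_data` call; treat that step as an assumption to check against the current AWS documentation.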

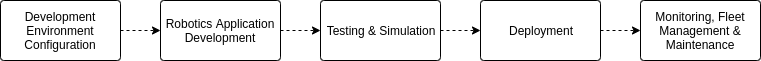

RoboMaker Application Development Cycle

RoboMaker provides several off-the-shelf code samples for robot jobs and/or simulation tasks in prebuilt world scenarios. Fleet management and monitoring are tightly coupled to the deployment process in RoboMaker. Amazon services including object/face detection, data logging, speech-to-text, and media analysis are wrapped into ROS packages readily available for integration into robot applications. On-field code and robot maintenance, crash reports, regression testing, and data aggregation are additional services AWS RoboMaker provides for the different robot deployments.

Simulation in AWS RoboMaker

AWS RoboMaker supports simulation platforms capable of rigorous robot application software testing without the need for high-end local hardware (computational needs are handled in the cloud) or manual monitoring. It can be used to create a realistic virtual representation of the operational environment and to mimic hardware behavior such as failures, sensing anomalies, and normal operation.

Rviz Simulation Environment in AWS RoboMaker

Physical aspects of robotic systems, such as kinematics, dynamics, collisions, and terrain interactions, can also be simulated in AWS RoboMaker. Enhanced simulation capabilities allow developers to transfer robot applications directly from the virtual robot model to the real-world robot.

Running a Sample AWS RoboMaker Application

To access AWS RoboMaker and explore its possibilities:

1. Create a free account at AWS - Cloud Computing Services. You can use all the available AWS cloud-based services with this one account.

2. Go to the AWS console and select RoboMaker from the list of services after logging in.

3. In the console panel on the left, select Try Sample Applications.

Select Sample Applications

4. From the list of available sample applications, select the “Self-Driving using Reinforcement Learning” option and click Launch simulation job.

List of Sample Applications in AWS RoboMaker

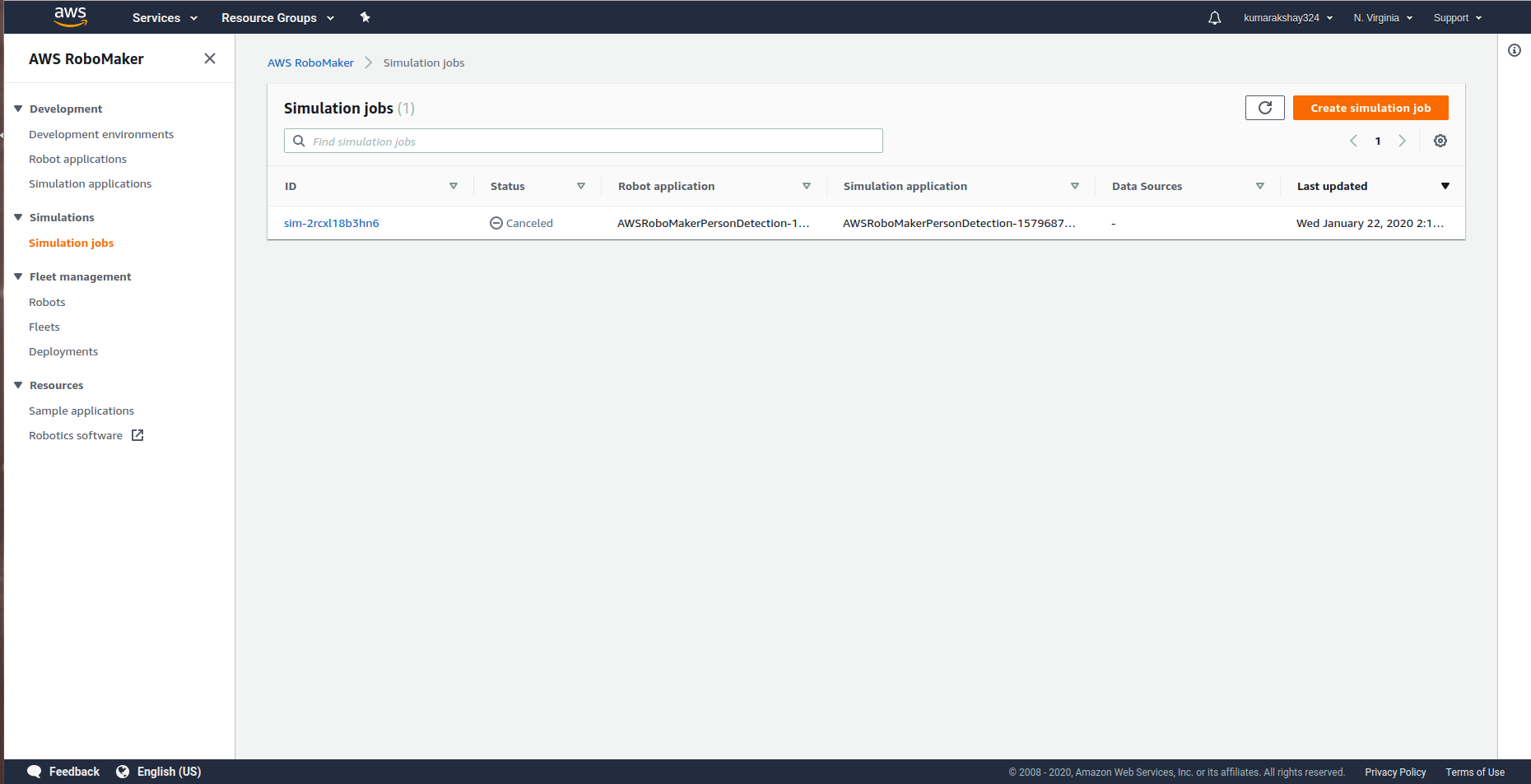

5. Wait for the resources required for the application to load. Once they are loaded, the status of the simulation job changes to Running.

Active Simulation Job Dashboard

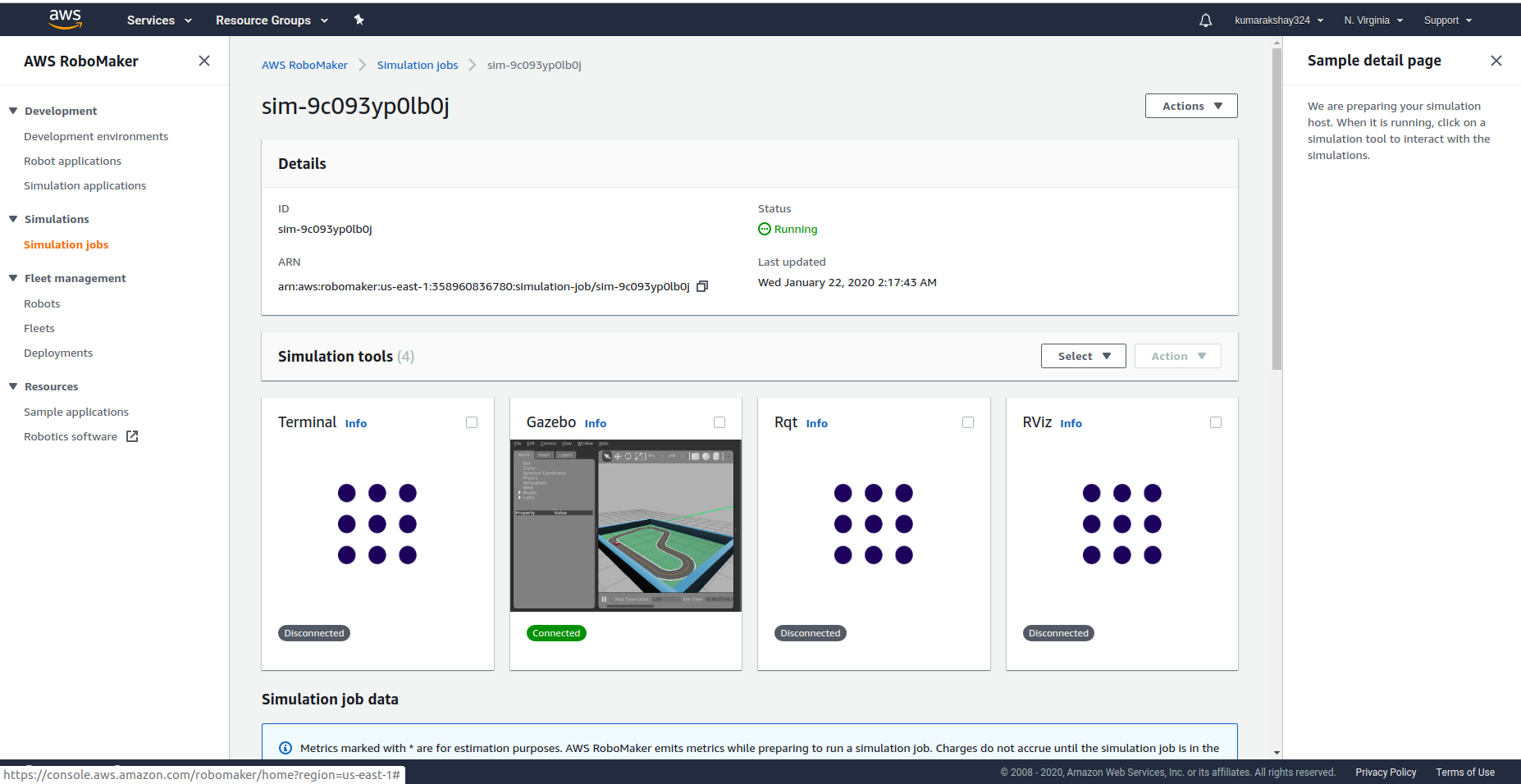

6. Click on any of the simulation tools to visualize an operational racecar running on reinforcement learning algorithms.

Gazebo Simulation Environment

7. Scroll down to explore the different compute usage metrics, memory use, application configuration, application ROS package name, ROS launch file running, and more.

Visualization of Different Metrics

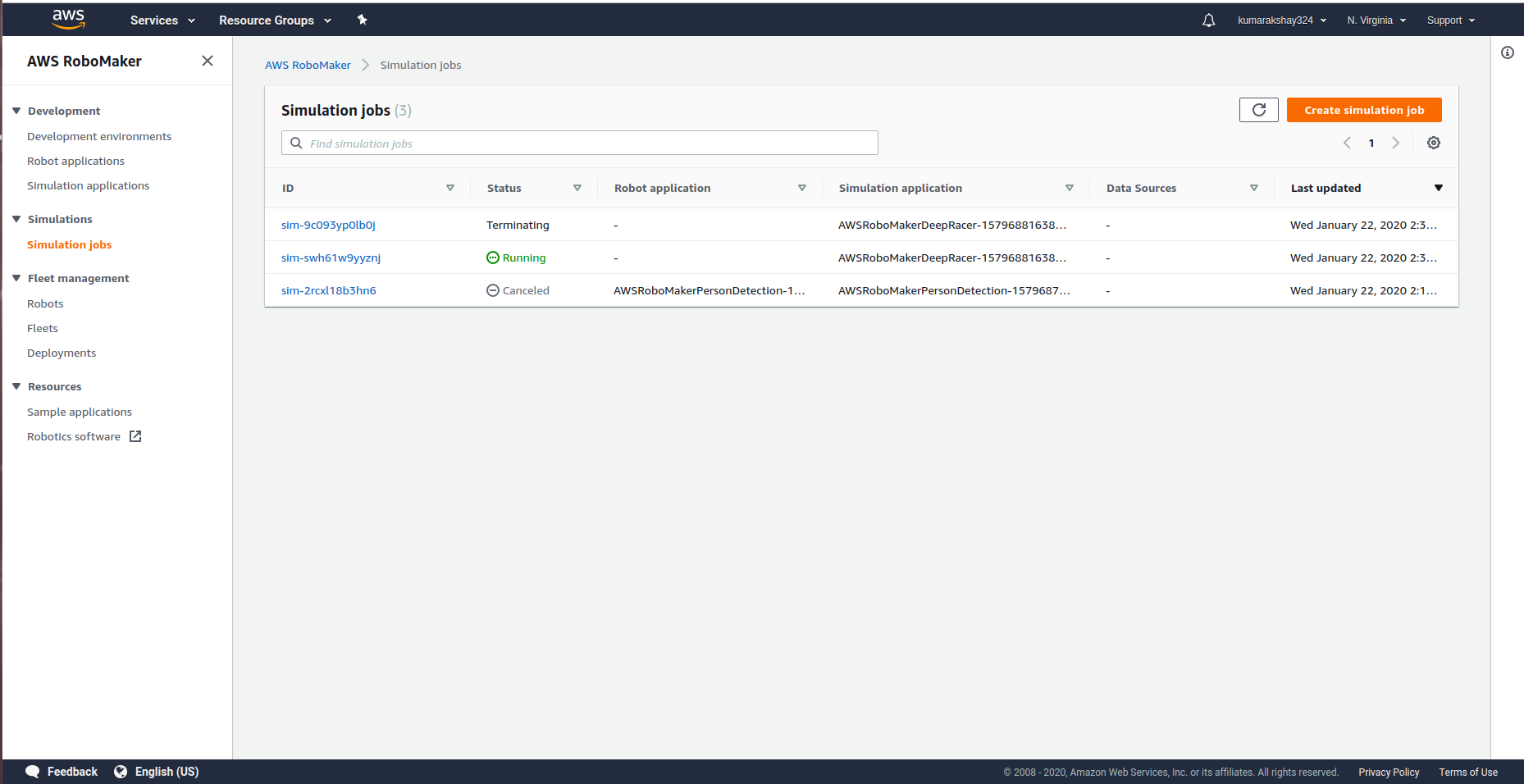

8. You can run multiple simulation jobs following these steps. Each of them runs independently while allowing for simultaneous monitoring and analysis. The dashboard shows the list of jobs with their current status. Simulations can be run for a maximum of 14 days uninterrupted, which makes simulation or hardware-in-the-loop testing of AI-based robot applications very convenient and hassle-free.

Simulation Jobs’ Dashboard
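The console steps above can also be approximated in code. The following is a minimal sketch, not the console's actual behavior: it only builds the request parameters for a simulation job and enforces the 14-day limit mentioned in step 8. The field names mirror the RoboMaker `CreateSimulationJob` request as I understand it, while the ARNs, package name, launch file, and the helper `build_simulation_job_request` are placeholders invented for illustration.

```python
# 14 days expressed in seconds: the maximum uninterrupted run time noted above.
MAX_JOB_DURATION_SECONDS = 14 * 24 * 60 * 60


def build_simulation_job_request(sim_app_arn, iam_role_arn, package_name,
                                 launch_file, duration_seconds=3600):
    """Return request parameters for one simulation job (nothing is sent)."""
    if not 0 < duration_seconds <= MAX_JOB_DURATION_SECONDS:
        raise ValueError(
            f"duration must be between 1 and {MAX_JOB_DURATION_SECONDS} seconds"
        )
    return {
        "maxJobDurationInSeconds": duration_seconds,
        "iamRole": iam_role_arn,
        "failureBehavior": "Fail",
        "simulationApplications": [
            {
                "application": sim_app_arn,
                # The ROS package and launch file shown in the job details.
                "launchConfig": {
                    "packageName": package_name,
                    "launchFile": launch_file,
                },
            }
        ],
    }


if __name__ == "__main__":
    # All identifiers below are hypothetical placeholders.
    request = build_simulation_job_request(
        sim_app_arn="arn:aws:robomaker:region:account:simulation-application/example",
        iam_role_arn="arn:aws:iam::account:role/example-role",
        package_name="my_sim_package",
        launch_file="my_world.launch",
        duration_seconds=2 * 60 * 60,
    )
    print(request)
```

Calling the helper several times with different launch files would give one request per independent job, which matches the way the dashboard lists jobs separately.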

Next Steps with AWS RoboMaker

The convenience of robot software development without investing in the infrastructure and management efforts makes rapid development and testing possible. AWS RoboMaker has laid the foundation for the integration of RaaS (robotics-as-a-service) and SaaS (software-as-a-service).

Given how easy it is to run a sample application, which is essentially a common ROS package, a sensible next step is to simulate a simple robot arm using a custom-defined URDF model and apply basic kinematics and dynamics simulations to it.
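To give a feel for the "basic kinematics" part of that exercise, here is a small sketch of forward kinematics for a two-link planar arm. It is independent of RoboMaker and ROS; the link lengths and joint angles are assumed example values, and a real URDF-based arm would normally get this from a kinematics library rather than hand-written trigonometry.

```python
import math


def forward_kinematics(l1, l2, theta1, theta2):
    """End-effector (x, y) of a two-link planar arm.

    l1, l2: link lengths; theta1: shoulder angle from the x-axis;
    theta2: elbow angle relative to the first link (both in radians).
    """
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


if __name__ == "__main__":
    # Example: unit-length links, shoulder at 0 rad, elbow bent 90 degrees.
    print(forward_kinematics(1.0, 1.0, 0.0, math.pi / 2))
```

Comparing these hand-computed positions with what the simulator reports for the same joint angles is one simple way to check that a custom URDF model is defined correctly.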